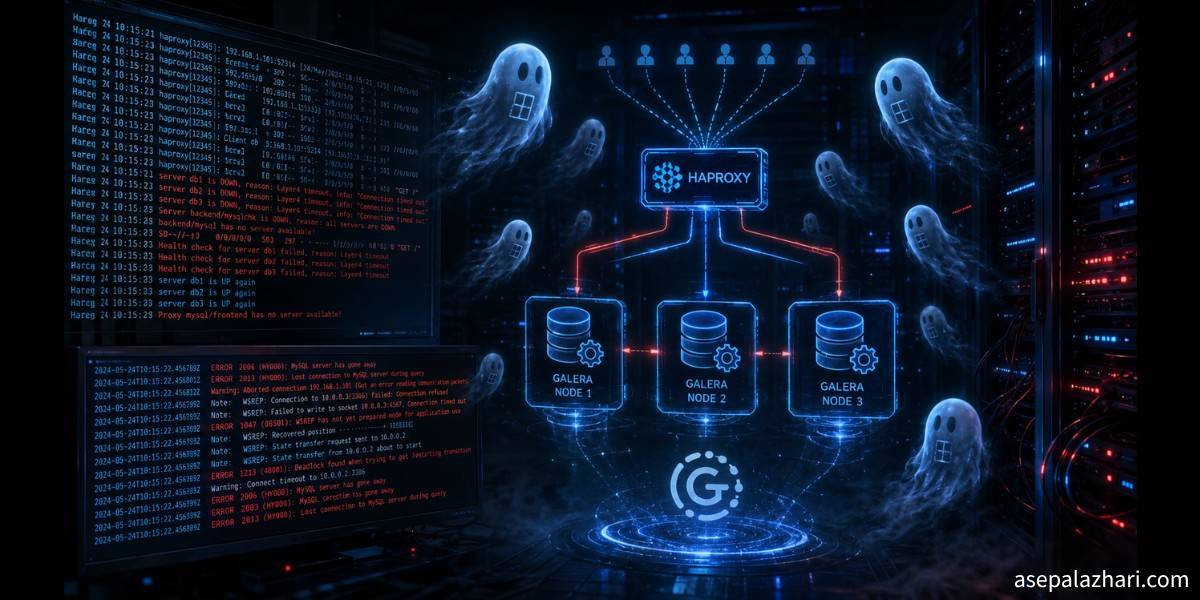

MySQL Gone Away: HAProxy, Galera, and Ghost Processes

At 2 AM, 80% of database connections were failing after migrating to HAProxy. The root cause was 83-day-old ghost processes nobody knew were still running.

At around 2 AM I was staring at a terminal showing something I did not want to see: MySQL server has gone away. A simple loop test against port 3307 returned this:

OK: 4 / FAIL: 1680% failure rate. We had just migrated application traffic to an HAProxy load balancer at one of the largest internet service providers in Indonesia. The database layer was a 3-node Galera cluster running MariaDB. Everything was supposed to work. It did not.

This is the full story of what happened, how I found the root cause, and what I learned after three hours of digging through processes that should have been dead 83 days ago.

The System Before We Touched It

The production environment was built around two Galera clusters behind a single HAProxy server. One cluster handled portal traffic on port 3306. The other handled application traffic on port 3307.

The problem was that the application was not using HAProxy at all. It connected directly to the primary database node, bypassing the load balancer. One reason for that was db-node-3, the third member of the application cluster, had been offline for over 13 months after a crash. Nobody had rejoined it. So the cluster was running 2-of-3, and HAProxy was configured to route to only 2 nodes even if you connected through it.

The topology looked like this:

Application traffic

|

|-- [direct] --> db-node-1:3306 (primary, 100% traffic)

|

`-- db-lb:3307 (HAProxy, unused by apps)

|-- port 3306 --> portal-cluster (3 nodes)

`-- port 3307 --> app-cluster (db-node-1, db-node-2, db-node-3)

Galera cluster (app-cluster):

db-node-1 -- Running, production active, all traffic lands here

db-node-2 -- Synced, available as SST donor

db-node-3 -- OFFLINE, 13+ months, crash survivorThe goal was to fix this properly. Rejoin db-node-3 to the cluster, enable Galera-aware health checks in HAProxy, and migrate the application to use the load balancer.

Phase 1: The Audit Found Two Hidden Problems

Before changing anything, I ran a read-only audit across all four servers. The results on the HAProxy server (db-lb) revealed why “MySQL server has gone away” errors were already happening for some users even before our migration.

Problem one: the timeout configuration was dangerously mismatched.

timeout client 1m (60 seconds)

timeout server 1m (60 seconds)

MySQL wait_timeout = 10 secondsMySQL was closing idle connections after 10 seconds. HAProxy expected them to stay open for 60 seconds. Any application using persistent connections or connection pooling would hold a connection, let it go idle for more than 10 seconds, and the next query would fail with MySQL has gone away.

Problem two: the health check was not Galera-aware.

The existing configuration used option mysql-check user haproxy. That only verifies that MySQL can accept a TCP connection. It does not check the Galera replication state. When a node enters DONOR/DESYNCED state during a State Snapshot Transfer, HAProxy has no idea. It keeps sending writes to that node even though it cannot handle them properly.

The plan: deploy clustercheck on port 9200, switch HAProxy to option httpchk, and fix the timeout values.

Phase 2: Rejoining the Crashed Node

db-node-3 had been offline for 13 months. It was running in standalone mode with wsrep_on=OFF, innodb_force_recovery=1 for read-only recovery, and no grastate.dat file at all.

Without a grastate.dat, Incremental State Transfer is impossible. The node needs a full SST. And the gcache from the other nodes could not hold 13 months of transactions. So the plan was a clean full SST from db-node-2.

The safest way to do this without touching production traffic:

- Force db-node-2 as the SST donor by setting

wsrep_sst_donor=db-node-2on the joiner - Temporarily comment out db-node-2 in HAProxy to prevent traffic during SST

- Clear the data directory on db-node-3 for a fresh SST

Step one: isolate db-node-2 from HAProxy:

sudo sed -i 's/^ server db-node-2/# server db-node-2/' /etc/haproxy/haproxy.cfg

sudo systemctl reload haproxyStep two: fix db-node-3 configuration:

# Remove recovery mode

sudo sed -i '/innodb_force_recovery/d' /etc/my.cnf.d/server.cnf

# Enable Galera replication

sudo sed -i 's/wsrep_on=OFF/wsrep_on=ON/' /etc/my.cnf.d/server.cnf

# Force donor selection — this protects db-node-1 from being chosen

sudo sed -i '/^wsrep_on=ON/a wsrep_sst_donor=db-node-2' /etc/my.cnf.d/server.cnfStep three: verify directory contents, then clear it for SST:

# Always verify before running a destructive command

ls -la /var/lib/mysql/

sudo systemctl stop mariadb

sudo rm -rf /var/lib/mysql/*

sudo chown mysql:mysql /var/lib/mysql

sudo chmod 750 /var/lib/mysql

sudo systemctl start mariadbI had estimated the SST would take 30 to 90 minutes based on roughly 227GB of disk usage on that server. It finished in minutes. Only about 28GB of actual MySQL data was transferred. The rest of the disk space was OS files, logs, and other non-MySQL content. rsync only moves actual database files.

The Galera log confirmed success:

[Note] WSREP: Shifting JOINER -> JOINED

[Note] WSREP: Shifting JOINED -> SYNCEDAll three nodes:

wsrep_cluster_size: 3

wsrep_cluster_status: Primary

wsrep_local_state_comment: SyncedPhase 3: Making HAProxy Galera-Aware

The standard solution for Galera-aware HAProxy health checks is clustercheck. It is a shell script that runs as an xinetd service on port 9200. It queries the local MySQL node for wsrep_local_state and returns HTTP 200 only if the value is 4, which means Synced. If the node is in any other state including Donor or Desynced, it returns HTTP 503.

There was one complication. These servers were running CentOS 7.6 (end of life) with MariaDB 10.1 (also end of life). Most package repositories were dead. On db-node-1, I could reach a mirror with the xinetd package. On db-node-2 and db-node-3, DNS could not even resolve the mirror list.

The workaround was to download the RPM on the one node that had access and transfer it:

# On db-node-1: download without installing

yumdownloader xinetd --disablerepo=mariadb --destdir=/tmp/

# Transfer to the others

scp /tmp/xinetd-2.3.15-14.el7.x86_64.rpm user@db-node-2:/tmp/

scp /tmp/xinetd-2.3.15-14.el7.x86_64.rpm user@db-node-3:/tmp/

# On db-node-2 and db-node-3: install from local file

sudo rpm -ivh /tmp/xinetd-2.3.15-14.el7.x86_64.rpmAfter deploying the clustercheck script and xinetd config on all three nodes, I created the MySQL user it needs (created once on db-node-1, replicated automatically to db-node-2 and db-node-3 via Galera):

CREATE USER IF NOT EXISTS 'clustercheck'@'localhost' IDENTIFIED BY 'clustercheckpassword';

GRANT USAGE ON *.* TO 'clustercheck'@'localhost';

FLUSH PRIVILEGES;Verification from the HAProxy server:

curl -s -o /dev/null -w "%{http_code}" http://db-node-1:9200 # 200

curl -s -o /dev/null -w "%{http_code}" http://db-node-2:9200 # 200

curl -s -o /dev/null -w "%{http_code}" http://db-node-3:9200 # 200All three returned 200. Then I updated HAProxy with the final configuration:

defaults

mode tcp

timeout client 28800s

timeout server 28800s

timeout connect 5s

timeout check 10s

backend app-cluster

balance leastconn

option tcpka

option httpchk GET /

server db-node-1 db-node-1:3306 check port 9200 inter 5s rise 2 fall 3 weight 1

server db-node-2 db-node-2:3306 check port 9200 inter 5s rise 2 fall 3 weight 1

server db-node-3 db-node-3:3306 check port 9200 inter 5s rise 2 fall 3 weight 1After reload, the HAProxy stats page showed L7OK/200 for all three nodes. Phase 2 and 3 done. I felt good about this.

Also Read: Kubernetes Cluster Down After Reboot: A Full Postmortem

Phase 4: The Mystery Failure

The next day, we migrated a test application to use db-lb:3307 instead of the direct connection. Within minutes, errors appeared. Running a connection test loop:

ok=0; fail=0

for i in $(seq 1 20); do

result=$(mysql -hdb-lb -P3307 --connect-timeout=3 2>&1 | head -1)

if echo "$result" | grep -q '1045'; then

ok=$((ok+1))

else

fail=$((fail+1))

fi

done

echo "OK: $ok / FAIL: $fail"Output: OK: 4 / FAIL: 16

Direct connections to each MySQL node individually worked fine. HAProxy was rejecting connections. But I had just fixed HAProxy. How?

Finding the Ghost Processes

The answer was in a single command:

ps aux | grep haproxy | grep -v grepOutput:

haproxy 10787 Feb11 437:57 haproxy -f haproxy.cfg

haproxy 10788 Feb11 93:13 haproxy -f haproxy.cfg

haproxy 10789 Feb11 155:41 haproxy -f haproxy.cfg

haproxy 10790 Feb11 266:49 haproxy -f haproxy.cfg

haproxy 3094 May06 00:08 /usr/sbin/haproxy -Ds -f /etc/haproxy/haproxy.cfg -sf 592

haproxy 3095 May06 00:08 /usr/sbin/haproxy -Ds -f /etc/haproxy/haproxy.cfg -sf 592Four processes started on February 11. Eighty-three days ago. Still running.

The history: HAProxy was originally started manually with haproxy -f haproxy.cfg, not via systemd. Those four processes were never registered in systemd. Every time someone ran systemctl reload haproxy or systemctl restart haproxy, systemd managed only the processes it knew about. The manually-started processes (PIDs 10787 through 10790) received no signals and kept running untouched with the old configuration.

The old configuration had option mysql-check instead of option httpchk. It had db-node-3 commented out. It had no clustercheck. It could not find any healthy backend for port 3307 because the health check method was now incompatible with how the backends were configured.

With nbproc 4, HAProxy runs 4 worker processes. Every generation adds 4 more listeners on the same port. Port 3307 now had 8 processes listening: 4 with the broken old config and 4 with the correct new config. The kernel distributed incoming connections across all 8 in round-robin. 4 out of 8 processes immediately rejected connections with no valid backend. Hence approximately 80% failure.

The fix was one command:

sudo kill 10787 10788 10789 10790Test result after:

OK: 20 / FAIL: 0Zero failures.

Root Cause 2: The wait_timeout Problem

After killing the ghost processes, intermittent errors were still appearing. Investigation showed that db-node-2 and db-node-3 had wait_timeout = 10, while db-node-1 had wait_timeout = 28800.

MySQL closes idle connections after wait_timeout seconds. 10 seconds is extremely aggressive for any application using connection pooling. A PHP-FPM worker that holds a connection between requests will find that connection dead after just 10 idle seconds. The next query returns “MySQL server has gone away”.

With balance leastconn, HAProxy distributed connections across all three nodes. Connections landing on db-node-2 or db-node-3 would expire after 10 seconds of inactivity.

Fix on both nodes:

SET GLOBAL wait_timeout = 28800;

SET GLOBAL interactive_timeout = 28800;And make it persistent:

# /etc/my.cnf.d/server.cnf

wait_timeout = 28800

interactive_timeout = 28800After both fixes, the error rate stayed at zero.

Also Read: Fixing RabbitMQ 4.x Connection: amqplib to CloudAMQP

What I Learned

Never start HAProxy manually on a server managed by systemd. If you do, systemctl restart will not kill those processes. They will keep running indefinitely with whatever configuration they had at startup. After every reload or restart, always verify:

# Check all process start times — no two should differ by days

ps aux | grep haproxy | grep -v grep | grep -v wrapperUse option httpchk with clustercheck for Galera clusters, not option mysql-check. TCP-level checks cannot detect nodes in DONOR or DESYNCED state. A node can accept a TCP connection and still be unable to handle writes.

Synchronize your timeouts. MySQL wait_timeout and HAProxy timeout client/timeout server must be aligned. A common approach is to match HAProxy timeouts to the MySQL wait_timeout value.

SST duration does not scale with total disk usage. rsync during SST only transfers actual MySQL data files. A server with 227GB of total disk usage might only have 28GB of actual database files to transfer.

Always verify directory contents before running rm -rf on a database data directory. One wrong path on a production server ends very badly. Ask yourself what is in that directory before removing it.

Also Read: Kubernetes Logging Done Right: Fluent Bit to Elasticsearch

The Final Configuration That Worked

defaults

mode tcp

timeout client 28800s

timeout server 28800s

timeout connect 5s

timeout check 10s

backend app-cluster

balance leastconn

option tcpka

option httpchk GET /

server db-node-1 db-node-1:3306 check port 9200 inter 5s rise 2 fall 3 weight 1

server db-node-2 db-node-2:3306 check port 9200 inter 5s rise 2 fall 3 weight 1

server db-node-3 db-node-3:3306 check port 9200 inter 5s rise 2 fall 3 weight 1# MySQL on all nodes

wait_timeout = 28800

interactive_timeout = 28800And one preventive checklist after every HAProxy configuration change:

# Verify no ghost processes exist

ps aux | grep haproxy | grep -v grep | grep -v wrapper

# Test 20 connections and measure failure rate

ok=0; fail=0

for i in $(seq 1 20); do

result=$(mysql -hdb-lb -P3307 --connect-timeout=3 2>&1 | head -1)

if echo "$result" | grep -q '1045'; then ok=$((ok+1)); else fail=$((fail+1)); fi

done

echo "OK: $ok / FAIL: $fail"

# Check HAProxy stats for L7OK/200 on all backends

curl -s 'http://db-lb:9000/;csv' | grep app-cluster | awk -F',' '{print $2, $18, $19}'A single kill command resolved 80% of the failures. Proper timeout alignment resolved the rest. The underlying cause of the original “MySQL has gone away” complaints had been sitting in the system for months: an HAProxy health check that could not speak Galera and timeouts that were mismatched by a factor of 2,880. Taking time to audit before acting, and doing a proper postmortem when things fail, is the difference between resolving incidents quickly and chasing them for weeks.